By DocLens AI

Pilot To Production – The Year of Contextual Reasoning At Scale

2025 was a defining year for DocLens.ai. It was the year ClaimLens™ crossed an important threshold – from a promising AI platform with successful pilot performance to a production-grade system capable of standing up to real-world insurance workloads, regulatory scrutiny, and enterprise expectations.

Our focus as an engineering organization was not on chasing novelty. It was on earning trust. Trust in the accuracy of AI-generated outputs. Trust in the reliability of large-scale document processing. Trust in security, compliance, and operational rigor. Trust that the system delivers ROI and business outcomes. And ultimately, trust from insurance carriers and defense law firms who depend on ClaimLens in live claim and case workflows.

This article outlines how we approached that mandate across product, AI infrastructure, security, and user experience.

Making ClaimLens™ Production-Ready

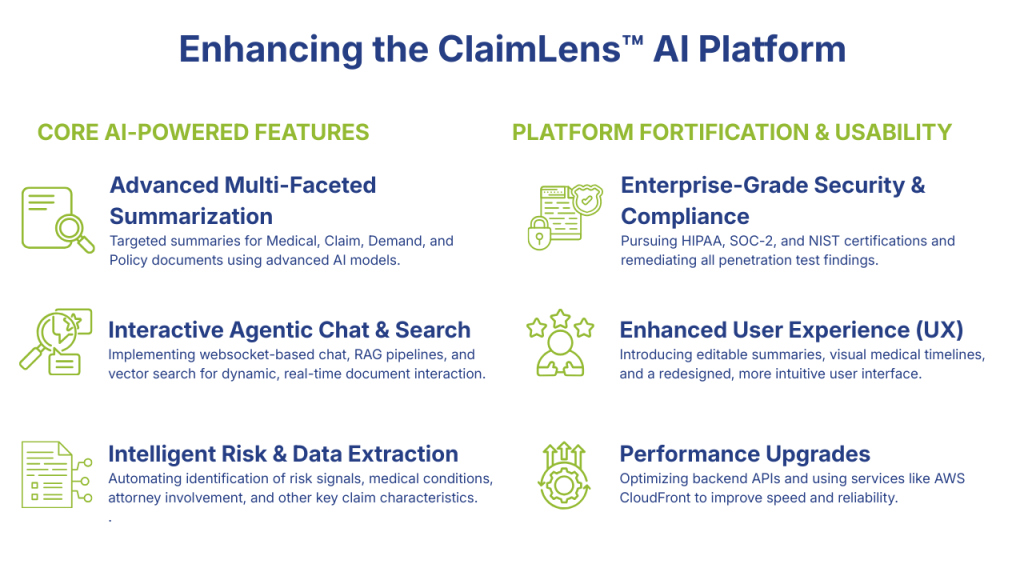

At the start of the year, ClaimLens™ already demonstrated strong technical potential. What it lacked was the operational maturity required for enterprise deployment. Throughout 2025, we concentrated on strengthening the platform across a few non-negotiable pillars:

- Accuracy and reliability of AI-generated Medical, Claim, Policy and Demand summaries

- Scalable extraction of insurance risk signals from deeply unstructured documents

- Security and governance aligned with SOC-2, HIPAA, and the NIST AI Risk Management Framework

- Performance, cost efficiency, and operational resilience at scale

By the end of the year, ClaimLens™ was no longer a system we were “validating.” It was a system customers were running in production.

Core Product Capabilities

Medical Summary: Precision at the Center

The Medical Summary is one of the most scrutinized outputs in ClaimLens™, and we treated it accordingly.

We made foundational changes to improve semantic accuracy and interpretability: reclassifying conditions by accident relevance and timing, resolving long-standing issues in date parsing and patient attribution, filtering medication false positives, and reorganizing lower-signal content into structured appendices. These were not cosmetic changes; they materially reduced downstream misinterpretation.

On the functionality front, we introduced Medical Necessity indicators, a timeline-driven view of care with provider-level citations, and an agentic chat interface integrated with Official Disability Guidelines. Under the hood, we re-architected summary generation to use asynchronous Bedrock calls and expanded AWS SQS adoption to improve reliability and throughput under load.
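
The asynchronous fan-out described above can be sketched roughly as follows. This is a minimal illustration, not the ClaimLens implementation: `invoke_model` is a hypothetical stand-in for an asynchronous Bedrock call, the in-memory `asyncio.Queue` stands in for an SQS queue, and the worker and concurrency counts are assumptions.

```python
import asyncio


async def invoke_model(prompt: str) -> str:
    """Hypothetical stand-in for an asynchronous Bedrock invocation."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return f"summary of: {prompt}"


async def worker(queue: asyncio.Queue, results: dict, limit: asyncio.Semaphore) -> None:
    """Pull section jobs off the queue until a None sentinel arrives."""
    while True:
        job = await queue.get()
        if job is None:
            return
        section, prompt = job
        async with limit:  # cap the number of concurrent model calls
            results[section] = await invoke_model(prompt)


async def summarize(sections: dict, workers: int = 3, max_in_flight: int = 2) -> dict:
    queue: asyncio.Queue = asyncio.Queue()  # stands in for an SQS queue
    results: dict = {}
    limit = asyncio.Semaphore(max_in_flight)
    for item in sections.items():
        queue.put_nowait(item)
    for _ in range(workers):
        queue.put_nowait(None)  # one shutdown sentinel per worker
    await asyncio.gather(*(worker(queue, results, limit) for _ in range(workers)))
    return results


summaries = asyncio.run(summarize({"history": "prior care", "meds": "medication list"}))
```

Decoupling job submission from model invocation in this way lets a backlog build up in the queue under load instead of failing requests, while the semaphore keeps the number of in-flight model calls bounded.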

Claim and Demand Summaries: Signal Without Noise

For Claim and Demand summaries, the work centered on signal extraction and presentation discipline.

We tightened prompts, removed invalid or misleading citations, resolved inconsistencies in claimant and attorney counts, and eliminated sections that added volume but little value. Medicare lien detection was integrated directly into claim summaries to surface high-risk signals earlier in the workflow.

Demand summaries were restricted to demand packets only, enriched with AWS Comprehend Medical outputs via RAG, and delivered in structured HTML formats suitable for direct consumption by adjusters and legal teams. Citation integrity in downloads was also improved across all features.

Medical and Liability Risk Reports: Context Matters

Risk scoring only works if context is correct. Extraction alone does not tell us whether a term was found in a General Requirement or in the context of the injured party.

We refined liability and damage classification prompts, added medically meaningful ranges such as Glasgow Coma Scale thresholds, eliminated false positives caused by acronym collisions, and corrected default severity escalations that skewed risk outputs.
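
As an illustration of a medically meaningful range, the conventional Glasgow Coma Scale bands (3 to 8 severe, 9 to 12 moderate, 13 to 15 mild) can be encoded as a small lookup. The function name and labels below are assumptions for the sketch; how ClaimLens maps these bands to risk scores is not specified here.

```python
def gcs_severity(score: int) -> str:
    """Map a Glasgow Coma Scale total (3-15) to its conventional severity band."""
    if not 3 <= score <= 15:
        # Out-of-range values are more likely extraction errors than real scores.
        raise ValueError(f"GCS total must be between 3 and 15, got {score}")
    if score <= 8:
        return "severe"
    if score <= 12:
        return "moderate"
    return "mild"


bands = {score: gcs_severity(score) for score in (3, 8, 9, 12, 13, 15)}
```

Rejecting out-of-range values rather than clamping them is a deliberate choice in this sketch: a "GCS 27" in a record is a signal that the extracted number was something else entirely.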

Attorney, law firm, and court detection logic was rebuilt using Chain-of-Thought prompting combined with RAG-based verification. Risk signals are now aggregated at the claim-file level, rather than being isolated artifacts of individual documents.
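
One way to picture claim-file aggregation is to roll document-level signals up by claim, keeping the highest severity seen for each signal type along with the documents that support it. The `Signal` fields, the signal names, and the max-severity rule below are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    claim_id: str
    document_id: str
    kind: str       # hypothetical signal type, e.g. "medicare_lien"
    severity: int   # hypothetical scale where higher means riskier


def aggregate_by_claim(signals):
    """Roll document-level signals up to one entry per (claim, signal type)."""
    claims = defaultdict(dict)
    for s in signals:
        entry = claims[s.claim_id].setdefault(s.kind, {"severity": 0, "documents": []})
        entry["severity"] = max(entry["severity"], s.severity)
        if s.document_id not in entry["documents"]:
            entry["documents"].append(s.document_id)  # keep supporting citations
    return dict(claims)


signals = [
    Signal("C1", "D1", "medicare_lien", 2),
    Signal("C1", "D2", "medicare_lien", 3),
    Signal("C1", "D2", "attorney_involved", 1),
    Signal("C2", "D9", "medicare_lien", 1),
]
claim_view = aggregate_by_claim(signals)
```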

From a usability perspective, we added manual entity detection, contextual tabs, color-coded keywords, and live risk-score updates when annotations are modified or removed.

Agentic Chat and Search: Rebuilt, Not Retrofitted

Our conversational and search interfaces were rebuilt nearly end-to-end.

We migrated chat to a WebSocket-based architecture for real-time streaming, reduced cold-start latency through Lambda warmups, and introduced a deterministic multi-step workflow: QA-set resolution first, RAG fallback next, followed by targeted API calls.
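
The ordering of that workflow can be sketched as a fixed chain of resolvers, where each step either answers or defers to the next. The resolver functions, the sample QA set, and the keyword checks below are hypothetical placeholders; only the order (QA set, then RAG, then API calls) comes from the description above.

```python
from typing import Callable, List, Optional, Tuple

Resolver = Callable[[str], Optional[str]]

# Hypothetical curated question/answer set for a claim file.
QA_SET = {"what is the date of loss?": "2024-03-14"}


def qa_lookup(question: str) -> Optional[str]:
    return QA_SET.get(question.strip().lower())


def rag_fallback(question: str) -> Optional[str]:
    # Placeholder for retrieval plus generation over indexed documents.
    return "Retrieved passage about liens." if "lien" in question.lower() else None


def api_call(question: str) -> Optional[str]:
    # Placeholder for a targeted backend API call.
    return "Claim status: open" if "status" in question.lower() else None


PIPELINE: List[Tuple[str, Resolver]] = [
    ("qa_set", qa_lookup),
    ("rag", rag_fallback),
    ("api", api_call),
]


def answer(question: str, steps: List[Tuple[str, Resolver]] = PIPELINE) -> Tuple[str, str]:
    """Try each step in order and return (step_name, answer) from the first hit."""
    for name, resolve in steps:
        result = resolve(question)
        if result is not None:
            return name, result
    return "none", "No answer found."


source, reply = answer("What is the date of loss?")
```

Because the step order is fixed, the same question always takes the same path, which makes answers easier to audit than a free-form agent loop.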

Search was improved through layout-aware document processing, refined OpenSearch configurations, and upgraded embedding models. Importantly, we made the system embedding-model agnostic, allowing us to evolve retrieval quality without architectural churn.
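
Embedding-model agnosticism usually comes down to having the index depend on an interface rather than on a specific model. The sketch below assumes a single-method `Embedder` protocol and uses two toy embedders in place of real models; none of these names come from the ClaimLens codebase.

```python
import math
from typing import List, Protocol, Tuple


class Embedder(Protocol):
    def embed(self, text: str) -> List[float]: ...


class VowelCountEmbedder:
    """Toy embedder: one dimension per vowel."""
    def embed(self, text: str) -> List[float]:
        lowered = text.lower()
        return [float(lowered.count(v)) for v in "aeiou"]


class LetterCountEmbedder:
    """Toy embedder: one dimension per letter a-z."""
    def embed(self, text: str) -> List[float]:
        lowered = text.lower()
        return [float(lowered.count(chr(c))) for c in range(ord("a"), ord("z") + 1)]


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class Index:
    """Search index that knows only the Embedder interface, not the model."""
    def __init__(self, embedder: Embedder) -> None:
        self._embedder = embedder
        self._items: List[Tuple[str, List[float]]] = []

    def add(self, text: str) -> None:
        self._items.append((text, self._embedder.embed(text)))

    def search(self, query: str) -> str:
        q = self._embedder.embed(query)
        return max(self._items, key=lambda item: cosine(q, item[1]))[0]


hits = []
for embedder in (VowelCountEmbedder(), LetterCountEmbedder()):
    index = Index(embedder)
    index.add("policy endorsement")
    index.add("medical lien")
    hits.append(index.search("medical lien"))
```

Note that vectors from different models are not comparable, so swapping the embedder in a design like this still means rebuilding the index; what stays unchanged is the surrounding code.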

Specialized Summaries and New Surfaces

Beyond core claims, we expanded into adjacent domains:

- PolicyLens, with structured policy summaries, endorsement-aware keywording, and citation-backed chat

- Medical Professional Liability summaries spanning claims, demands, and depositions

- New claim-level summaries including Return to Work, Treatment Plans, and Co-Morbidity analysis, primarily for customer pilots and demonstrations

These extensions validated that our underlying architecture could generalize beyond standard claims.

AI and Data Pipeline: Engineering for Failure Modes

AI systems fail quietly if you let them. We did not.

Throughout the year, we upgraded and evaluated multiple LLMs, including Anthropic Claude variants, task-specific Nova and open-source models, and multiple embedding strategies. Adversarial testing was conducted as part of NIST AI RMF alignment, and model behavior was continuously evaluated against human-verified ground truth.

On the data side, we implemented layout-based indexing with section headers preserved, added chunk-threshold enforcement for RAG, and ensured index lifecycle correctness when documents were deleted or replaced.
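
A rough sketch of header-preserving chunking with a size cap follows. It assumes "chunk-threshold enforcement" means an upper bound on chunk size, measured here in words for simplicity; the function name, the word-based limit, and the sample sections are illustrative assumptions.

```python
from typing import Dict, List, Tuple


def chunk_sections(sections: List[Tuple[str, str]], max_words: int) -> List[Dict[str, str]]:
    """Split each section body into chunks of at most max_words words.

    Every chunk carries its section header so retrieval results keep
    their layout context.
    """
    if max_words < 1:
        raise ValueError("max_words must be at least 1")
    chunks = []
    for header, body in sections:
        words = body.split()
        for start in range(0, len(words), max_words):
            chunks.append({
                "header": header,
                "text": " ".join(words[start:start + max_words]),
            })
    return chunks


document = [
    ("Diagnosis", "lumbar strain with radiculopathy noted"),
    ("Treatment", "physical therapy"),
]
chunks = chunk_sections(document, max_words=2)
```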

AWS Textract pipelines were hardened with improved retry logic, handwriting detection, and structured extraction across forms and tables. We filtered low-signal documents, flagged sparse pages, restricted email ingestion, and automated splitting and aggregation for oversized files.
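
Retry logic of this kind is commonly built as exponential backoff with jitter. The sketch below shows that general pattern under assumed parameters; `TransientError` and `flaky_extract` are hypothetical stand-ins for a throttled extraction call, and the attempt count and delays are not taken from the production pipeline.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying, such as throttling."""


def call_with_retry(func, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call func, retrying transient failures with exponential backoff and jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except TransientError:
            if attempt == max_attempts:
                raise  # retries exhausted: surface the failure
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay))  # jitter spreads out retries


attempts = {"count": 0}


def flaky_extract():
    # Fails twice, then succeeds, to exercise the retry path.
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("throttled")
    return "extracted"


delays = []
result = call_with_retry(flaky_extract, sleep=delays.append)  # record delays instead of sleeping
```

Only errors marked as transient are retried; anything else propagates immediately, so a malformed document does not burn through the retry budget.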

Security, Compliance, and Governance: No Shortcuts

Security and compliance were treated as first-class engineering problems.

We successfully completed our second consecutive SOC-2 audit, initiated HIPAA certification with policy and recovery testing, and executed a comprehensive NIST AI RMF alignment effort spanning governance, risk identification, and continuous monitoring.

Penetration test findings were fully remediated, including rate limiting, CSP enforcement, token hardening, malware scanning for uploads, and resolution of Inspector and Security Hub findings.
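
Rate limiting can be implemented in several ways; a token bucket is one common choice and is shown here purely as an illustration. The class, its parameters, and the use of explicit timestamps are assumptions for the sketch, not a description of the deployed control.

```python
class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(self, capacity: int, refill_per_second: float, now: float = 0.0) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)
        self.updated = now

    def allow(self, now: float) -> bool:
        """Return True if a request arriving at time `now` may proceed."""
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = max(self.updated, now)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


bucket = TokenBucket(capacity=2, refill_per_second=1.0)
# Three requests at t=0 exhaust the burst; one second later a token is back.
decisions = [bucket.allow(0.0), bucket.allow(0.0), bucket.allow(0.0), bucket.allow(1.0)]
```

Passing the timestamp in explicitly keeps the sketch deterministic; a real service would typically read a monotonic clock and keep one bucket per client or token.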

We also implemented group-based access control, automated deactivation of inactive users, PII-tagged role enforcement, and systematic scrubbing of sensitive data.
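
Automated deactivation of inactive users reduces to comparing each account's last activity against an idle threshold. In the sketch below the 90-day window, the function name, and the sample accounts are all assumptions; the actual policy window is not stated in this article.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def users_to_deactivate(
    last_login: Dict[str, datetime],
    now: datetime,
    max_idle: timedelta = timedelta(days=90),  # assumed policy window
) -> List[str]:
    """Return the users whose last login is older than max_idle, sorted by name."""
    return sorted(user for user, seen in last_login.items() if now - seen > max_idle)


now = datetime(2025, 12, 1, tzinfo=timezone.utc)
last_login = {
    "adjuster_a": datetime(2025, 11, 20, tzinfo=timezone.utc),
    "reviewer_b": datetime(2025, 6, 1, tzinfo=timezone.utc),
}
stale = users_to_deactivate(last_login, now)
```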

Infrastructure and Operations: Quietly Doing the Hard Work

Significant effort went into infrastructure reliability and cost discipline.

We integrated RDS Proxy with improved retry handling, introduced CloudFront-backed downloads, upgraded PostgreSQL, tuned Lambda memory and concurrency, optimized Docker build caching, and lazy-loaded high-cost components like risk reports. These changes reduced both latency and AWS spend.

We also supported multiple customer and pilot environments with clean account setup and teardown processes while preserving data integrity.

UX and Frontend: Reducing Friction, Not Adding Features

Much of the UX work in 2025 was about removing friction.

We redesigned claim uploads, introduced editable summaries, improved citation popups with precise document and page references, aligned HTML and PDF outputs, and hardened the interface against user error during processing. Smaller changes (remembering last-visited files, sticky viewer controls, alphabetical sorting, better tooltips, and the like) collectively improved daily usability for adjusters and reviewers.

Measuring What Matters

Finally, we grounded our progress in measurement.

All summaries in select customer accounts underwent human expert–guided evaluation against ground truth. The result was sustained accuracy above 95 percent at production scale, across claim files comprising tens of thousands of pages per month.
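
For readers who want a concrete picture of such a measurement, one simple formulation is the fraction of expert-reviewed items marked correct, broken out by summary type. The scoring unit, the function name, and the sample data below are made up for illustration and do not reproduce the actual evaluation protocol or its results.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def accuracy_by_type(reviews: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute per-summary-type accuracy from (summary_type, is_correct) pairs."""
    totals = defaultdict(lambda: [0, 0])  # summary type -> [correct, reviewed]
    for summary_type, is_correct in reviews:
        totals[summary_type][1] += 1
        if is_correct:
            totals[summary_type][0] += 1
    return {t: correct / reviewed for t, (correct, reviewed) in totals.items()}


# Illustrative sample only, not real evaluation data.
sample = [("medical", True), ("medical", True), ("medical", False), ("claim", True)]
scores = accuracy_by_type(sample)
```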

That outcome did not come from a single model upgrade. It came from disciplined engineering, relentless iteration, and a refusal to treat “mostly correct” as acceptable.

Looking Ahead

2025 was about foundations. 2026 will be about leverage.

ClaimLens™ is now a system we can confidently extend, optimize, and embed deeper into insurance workflows. The work ahead is still complex, but the platform beneath it is solid.

That was the goal.
