What is MCP — and why is everyone reaching for it?

Every new wave of AI infrastructure brings its own cargo cult. Right now, the cargo cult has formed around MCP, the Model Context Protocol. Teams are wiring it in before they've asked the most basic question: does my agentic chat actually need it?

The Model Context Protocol is an open standard that defines how AI models connect to external tools, data sources, and services in a structured, composable way. Instead of hand-rolling integrations for every tool your LLM needs to call, MCP provides a shared interface — servers expose capabilities, and a compliant AI client can discover and use them dynamically.
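To make "servers expose capabilities, clients discover them" concrete, here is a toy, in-memory sketch of the two JSON-RPC 2.0 methods MCP uses for tools: `tools/list` for discovery and `tools/call` for execution. It deliberately skips the real protocol's initialisation handshake, capability negotiation and transports (stdio or HTTP), and the `lookup_clause` tool and `handle` function are hypothetical names. A production server would normally be built on one of the official MCP SDKs rather than by hand.

```python
import json

# Hypothetical tool registry: name -> (description, JSON Schema for inputs, handler).
TOOLS = {
    "lookup_clause": (
        "Return the text of a contract clause by its identifier.",
        {
            "type": "object",
            "properties": {"clause_id": {"type": "string"}},
            "required": ["clause_id"],
        },
        lambda args: f"Clause {args['clause_id']}: (placeholder text)",
    ),
}


def _error(req_id, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Handle one JSON-RPC 2.0 request string and return the response string.

    Only tools/list and tools/call are sketched; a real MCP server also
    performs an initialize handshake and runs over a transport.
    """
    req = json.loads(raw)
    req_id = req.get("id")
    method = req.get("method")

    if method == "tools/list":
        # Discovery: advertise each tool's name, description and input schema.
        result = {
            "tools": [
                {"name": name, "description": desc, "inputSchema": schema}
                for name, (desc, schema, _) in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        params = req.get("params", {})
        entry = TOOLS.get(params.get("name"))
        if entry is None:
            return _error(req_id, -32602, f"Unknown tool: {params.get('name')}")
        _, _, fn = entry
        text = fn(params.get("arguments", {}))
        # Tool results come back as a list of typed content items.
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return _error(req_id, -32601, f"Method not found: {method}")

    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


if __name__ == "__main__":
    print(handle('{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}'))
```

Any client that speaks these messages can discover and call `lookup_clause` without knowing about it in advance; that shared contract is the whole point of the protocol, and also the part you must run, version and secure.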

Standardisation is a force multiplier — for cost as well as capability.

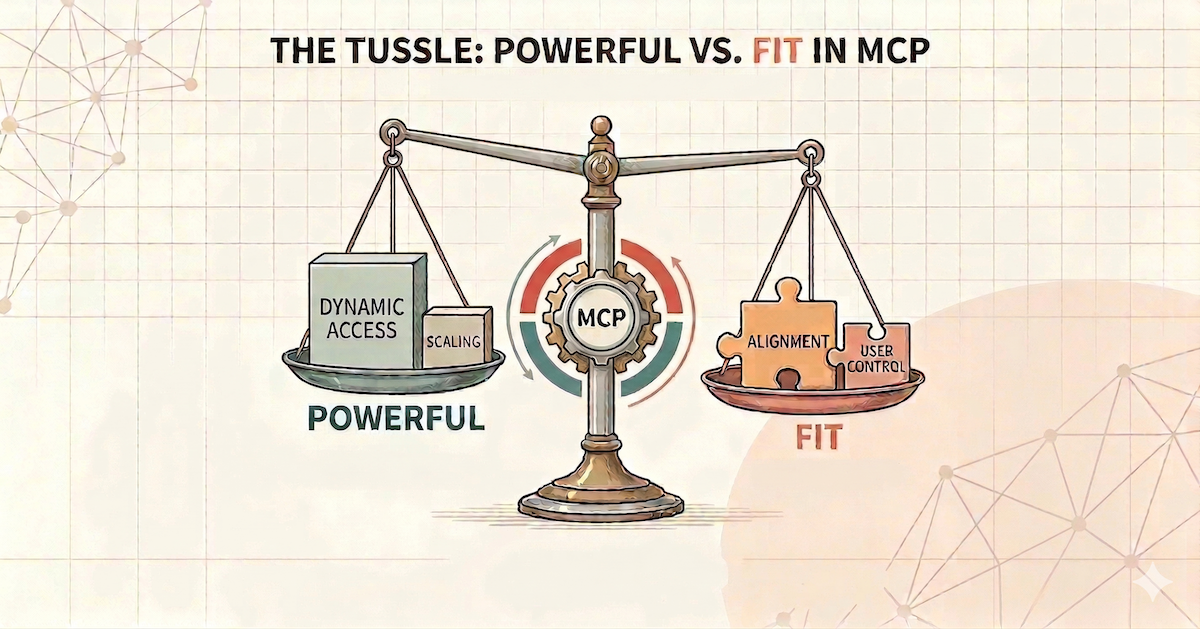

The protocol solves real problems at scale: uniform tool contracts, dynamic discovery, ecosystem reuse, and clean separation between model logic and tool execution. But it comes with real costs too. The question isn't whether MCP is good. It's whether your use case is the right fit for it — today, not theoretically.

Where MCP earns its keep — and where it doesn't

The benefits of MCP are real, but they accrue at scale. Below is an honest comparison of what you gain versus what you take on.

What you take on: another process to deploy, monitor, version, and secure. Debugging gets harder, because you're now tracing serialisation issues and protocol edge cases on top of model behaviour. Every tool call includes transport overhead, which adds up in multi-step agentic flows. And adopting a protocol before you need it is a classic premature abstraction.

Where the costs dominate: Small teams · Early-stage · Few tools

What you gain: when multiple clients or teams need to share the same tool integrations, a shared MCP server eliminates duplication. When your tool set is large, dynamic, or permission-driven, the protocol's discovery model pays off. And when a community-maintained server already covers your integration, plugging in is faster than building from scratch.

Where the benefits pay off: Many tools · Multi-client · Dynamic access

The pattern is consistent: MCP's overhead is roughly constant, while its benefit scales with usage. Until your usage justifies the overhead, the ledger is in the red.
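Per-call transport cost is easy to underestimate because it is paid on every step. The sketch below times a tool invoked directly in-process against the same tool invoked through a JSON encode/decode round trip, which stands in for only the serialisation part of a protocol hop. It is a deliberately simplified toy: it ignores IPC and network latency, which in practice usually dominate, and `count_terms` is a hypothetical tool.

```python
import json
import time


def count_terms(text: str, term: str) -> int:
    """Hypothetical in-process tool: count case-insensitive occurrences of a term."""
    return text.lower().count(term.lower())


def call_direct(args: dict) -> int:
    # Native path: a plain function call inside the application process.
    return count_terms(**args)


def call_via_json(args: dict) -> int:
    # Simulated protocol path: serialise the request, deserialise it on the
    # "server" side, then serialise and deserialise the result on the way back.
    request = json.loads(json.dumps({"name": "count_terms", "arguments": args}))
    result = count_terms(**request["arguments"])
    return json.loads(json.dumps({"result": result}))["result"]


def time_calls(fn, args: dict, n: int) -> float:
    """Return total wall-clock seconds for n sequential calls, as in a multi-step flow."""
    start = time.perf_counter()
    for _ in range(n):
        fn(args)
    return time.perf_counter() - start


if __name__ == "__main__":
    args = {"text": "Indemnity clause. Indemnity cap. Liability.", "term": "indemnity"}
    assert call_direct(args) == call_via_json(args)
    for label, fn in (("direct", call_direct), ("json round trip", call_via_json)):
        print(f"{label}: {time_calls(fn, args, 10_000):.4f}s")
```

The absolute numbers depend entirely on your machine; what matters is that any per-call cost is multiplied by the number of tool calls in an agentic loop.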

Native function calling: often the right answer

Most AI providers — Anthropic included — expose native tool use directly in the API. You define a JSON schema for each tool, the model decides when to call it, and you handle execution in your own code. No additional process, no extra protocol.
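Here is a minimal sketch of the application side of that loop, using the tool-definition and content-block shapes from Anthropic's Messages API (`name`, `description`, `input_schema`; `tool_use` and `tool_result` blocks). The API request itself is omitted so the example runs offline: `fake_tool_use_block` stands in for what the model would return, and `get_clause`, `HANDLERS` and `run_tool` are hypothetical names.

```python
import json

# Tool definition in the shape the Messages API expects in its `tools` parameter.
GET_CLAUSE_TOOL = {
    "name": "get_clause",
    "description": "Fetch a clause from a contract by clause number.",
    "input_schema": {
        "type": "object",
        "properties": {
            "contract_id": {"type": "string"},
            "clause": {"type": "string"},
        },
        "required": ["contract_id", "clause"],
    },
}


def get_clause(contract_id: str, clause: str) -> str:
    """Hypothetical implementation; a real app would query its own document store."""
    return f"[{contract_id}, clause {clause}] placeholder clause text"


# Execution stays in your own code: a plain dict maps tool names to functions.
HANDLERS = {"get_clause": get_clause}


def run_tool(block: dict) -> dict:
    """Execute one tool_use content block and build the matching tool_result block."""
    handler = HANDLERS.get(block["name"])
    if handler is None:
        return {
            "type": "tool_result",
            "tool_use_id": block["id"],
            "content": f"Unknown tool: {block['name']}",
            "is_error": True,
        }
    output = handler(**block["input"])
    return {"type": "tool_result", "tool_use_id": block["id"], "content": output}


if __name__ == "__main__":
    # Stand-in for a tool_use block returned when the model decides to call the tool.
    fake_tool_use_block = {
        "type": "tool_use",
        "id": "toolu_example",
        "name": "get_clause",
        "input": {"contract_id": "MSA-001", "clause": "12.3"},
    }
    # This dict would go back to the model inside the next user message's content.
    print(json.dumps(run_tool(fake_tool_use_block), indent=2))
```

In a live loop you would pass `tools=[GET_CLAUSE_TOOL]` with the request, call `run_tool` on each `tool_use` block while the response's `stop_reason` is `"tool_use"`, and send the results back; the exact client calls depend on the SDK and version you use.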

No MCP server. No transport layer. No extra serialisation hop. Your tools run inside your own application process.

For most agentic chat applications, this is entirely sufficient. Your tools are known at build time, you control execution, debugging is straightforward, and latency is minimised. You spend nothing from your complexity budget until you genuinely need more.

Native tool use is not a compromise. It is often the correct, deliberate choice — and treating it as such is an engineering virtue, not a shortcut.

A framework for deciding

Before reaching for MCP, work through these five questions. The answers usually make the decision obvious.

- How many distinct tools does your agent need? Fewer than ten, known at build time — native handles this with ease. Dozens of tools that vary by context or user — MCP begins to earn its keep.

- Are multiple clients or teams consuming the same tools? Single app, single model — native is almost always enough. Three different AI apps in your org sharing the same integrations — a shared MCP server is worth it.

- Is your tool set dynamic or permission-driven? Different users getting different tools at runtime based on roles or subscription tiers — MCP's dynamic discovery becomes genuinely valuable.

- Do you have pre-existing MCP infrastructure? If your org already runs MCP servers, the marginal cost of connecting is low. Starting from scratch — weigh that infrastructure investment honestly.

- Is this production-grade today, or still evolving? Building something that will change significantly in the next 60 days — stay native. Lock in MCP once the shape of your tools has stabilised.
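Question three is where the trade-off is sharpest. The core idea is simple: the tool list offered to the model is computed per request from the user's role rather than fixed at build time. The sketch below shows that filtering in plain Python; the roles, tool names and helper functions are hypothetical. In a native setup you would send the filtered list as the request's tool definitions, while an MCP server can do the equivalent filtering when answering `tools/list`, in one place shared by every client.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    allowed_roles: frozenset  # roles permitted to see and call this tool


# Hypothetical catalogue; names and roles are illustrative only.
CATALOGUE = [
    Tool("search_corpus", "Full-text search over documents.", frozenset({"viewer", "analyst", "admin"})),
    Tool("extract_clauses", "Extract clauses from a contract.", frozenset({"analyst", "admin"})),
    Tool("flag_risk", "Flag a document for risk review.", frozenset({"admin"})),
]


def tools_for(role: str) -> list[dict]:
    """Build the tool definitions visible to one user at request time.

    Input schemas are omitted for brevity; a real definition needs one.
    """
    return [
        {"name": t.name, "description": t.description}
        for t in CATALOGUE
        if role in t.allowed_roles
    ]


def authorise_call(role: str, tool_name: str) -> bool:
    """Re-check permissions at execution time; never rely on the visible list alone."""
    return any(t.name == tool_name and role in t.allowed_roles for t in CATALOGUE)


if __name__ == "__main__":
    for role in ("viewer", "analyst", "admin"):
        print(role, [t["name"] for t in tools_for(role)])
```

With three roles and a static catalogue this is trivial to do natively; the case for MCP grows as the catalogue, the roles and the number of consuming clients multiply.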

The table below summarises the most common scenarios:

| Scenario | Recommended approach |
|---|---|
| Single-app prototype, 3–5 tools, one team | Native |
| Internal tool with a stable, small tool set | Native |
| Early-stage product, tool set not yet settled | Native |
| Multiple AI clients sharing the same integrations | MCP |
| Tools vary dynamically by user or permission | MCP |
| Community MCP server already covers your integration | MCP |
| 20+ tools across multiple domains | MCP |
| Latency-sensitive chat with tight SLAs | Evaluate carefully |
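For teams that like to make such checklists explicit in an architecture review, the five questions and the table above can be encoded as a small function. Treat it as one possible reading rather than a rule: the ten-tool and 20-tool thresholds and the 60-day horizon come from the text, while the `Context` fields, the order of the checks, and the choice to let existing MCP infrastructure tip only mid-sized tool sets are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Context:
    # Hypothetical inputs, one per question or table row.
    tool_count: int = 5
    tools_known_at_build_time: bool = True
    client_count: int = 1                 # distinct AI apps or teams consuming the tools
    tools_vary_by_user: bool = False      # role- or tier-dependent tool sets
    has_mcp_infrastructure: bool = False
    community_server_available: bool = False
    expect_major_change_within_60_days: bool = False
    latency_sensitive: bool = False


def recommend(ctx: Context) -> str:
    """Return "Native", "MCP" or "Evaluate carefully" for a given context."""
    # Question 5: while the tool set is still moving, stay native.
    if ctx.expect_major_change_within_60_days:
        return "Native"

    many_tools = ctx.tool_count >= 20 or not ctx.tools_known_at_build_time  # Question 1
    # Question 4 (assumption): existing MCP infrastructure tips a mid-sized set towards MCP.
    mid_sized_with_infra = 10 <= ctx.tool_count < 20 and ctx.has_mcp_infrastructure
    reasons_for_mcp = [
        many_tools,
        mid_sized_with_infra,
        ctx.client_count > 1,               # Question 2
        ctx.tools_vary_by_user,             # Question 3
        ctx.community_server_available,     # table: community server row
    ]
    if any(reasons_for_mcp):
        # Tight SLAs: measure the transport cost before committing.
        return "Evaluate carefully" if ctx.latency_sensitive else "MCP"
    return "Native"


if __name__ == "__main__":
    print(recommend(Context()))
    print(recommend(Context(client_count=3)))
    print(recommend(Context(tools_vary_by_user=True, latency_sensitive=True)))
```

Note that with no reason to adopt MCP, a latency-sensitive app simply stays native; the "Evaluate carefully" row only matters once something is pulling you towards the protocol.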

The DocLens perspective

Our platform applies AI to complex, unstructured legal and risk data — searching corpora, extracting clauses, cross-referencing regulation databases, flagging risk patterns. You'd think we'd be all-in on MCP.

In practice, several of our core pipelines use native function calling. The tool set is well-defined, the execution environment is ours, and we need tight control over latency and error handling in production. We periodically ask ourselves the five questions above and are open to using MCP when the benefits genuinely outweigh the costs — but we don't let the protocol's appeal substitute for that honest evaluation.

The lesson is simple: use the right tool for the actual job, not the most impressive-sounding tool for the theoretical job. Architecture decisions should be driven by present requirements and near-term trajectory — not by what seems elegant in the abstract.

We are constantly evaluating. The moment we decide we need MCP, we will adopt it... and you will definitely see us blog about it 🙂

Don't let hype drive your architecture. The simpler thing is usually the better thing — and choosing simplicity deliberately is an engineering virtue.
